Edge computing is transforming the way data from millions of devices around the world is handled, processed, and delivered. The explosive growth of internet-connected devices (the IoT), along with new applications that require real-time computing power, continues to drive edge-computing systems.

Faster networking technologies, such as 5G wireless, are allowing edge-computing systems to accelerate the creation and support of real-time applications such as video processing and analytics, self-driving cars, artificial intelligence and robotics, to name a few.

While the early goal of edge computing was to reduce the bandwidth costs of moving the growing volume of IoT-generated data over long distances, the rise of real-time applications that need processing at the edge is now driving the technology forward.

What is edge computing?

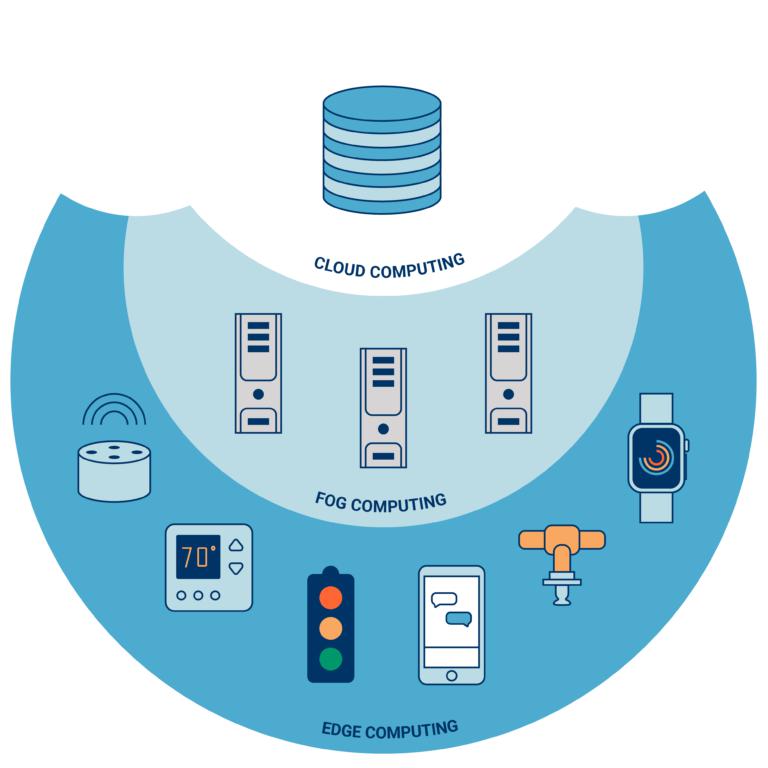

Gartner defines edge computing as “a part of a distributed computing topology in which information processing is located close to the edge—where things and people produce or consume that information.”

At its basic level, edge computing brings computation and data storage closer to the devices where data is being gathered, rather than relying on a central location that can be thousands of miles away. This is done so that data, especially real-time data, does not suffer latency issues that can affect an application’s performance. In addition, companies can save money by having the processing done locally, reducing the amount of data that needs to be sent to and processed in a centralized or cloud-based location.
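
To put rough numbers on the distance argument, the sketch below computes round-trip propagation delay only, assuming signals travel through optical fiber at roughly 200,000 km/s (about two-thirds the speed of light in a vacuum). Real latency also includes routing, queuing and processing time, so these figures are a lower bound; the two distances are illustrative choices, not measurements.

```python
# Rough lower bound on network round-trip time from propagation delay alone.
# Assumption: signals in optical fiber travel at about 200,000 km/s.
FIBER_SPEED_KM_PER_S = 200_000


def round_trip_ms(distance_km: float) -> float:
    """Return the round-trip propagation delay in milliseconds for a one-way distance."""
    one_way_s = distance_km / FIBER_SPEED_KM_PER_S
    return 2 * one_way_s * 1000


# Illustrative distances: a distant cloud region vs. a nearby edge site.
for label, km in [("distant data center", 4000), ("nearby edge node", 20)]:
    print(f"{label}: {round_trip_ms(km):.1f} ms minimum round trip")
# distant data center: 40.0 ms minimum round trip
# nearby edge node: 0.2 ms minimum round trip
```

Even before any processing happens, a data center roughly 2,500 miles away costs tens of milliseconds per request, while a nearby edge node costs a fraction of a millisecond.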

Edge computing was developed in response to the exponential growth of IoT devices, which connect to the internet either to receive information from the cloud or to deliver data back to it. Many of these devices generate enormous amounts of data during the course of their operations.

Think about devices that monitor manufacturing equipment on a factory floor or an internet-connected video camera that sends live footage from a remote office. While a single device producing data can transmit it across a network quite easily, problems arise when the number of devices transmitting data at the same time grows. Instead of one video camera transmitting live footage, multiply that by hundreds or thousands of devices. Not only will quality suffer due to latency, but the costs in bandwidth can be tremendous.
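
A back-of-the-envelope calculation shows how quickly this adds up. The sketch assumes each camera sends a continuous stream at 4 Mbit/s and counts a month as 30 days; that bitrate is an assumption for illustration, since real bitrates vary with codec, resolution and scene.

```python
# Back-of-the-envelope aggregate bandwidth for many cameras streaming to a central site.
# Assumption: each camera sends a continuous 4 Mbit/s stream (illustrative value).
BITRATE_MBPS = 4.0
SECONDS_PER_MONTH = 30 * 24 * 3600  # 30-day month


def aggregate_gbps(cameras: int, bitrate_mbps: float = BITRATE_MBPS) -> float:
    """Total sustained uplink needed, in Gbit/s."""
    return cameras * bitrate_mbps / 1000


def monthly_terabytes(cameras: int, bitrate_mbps: float = BITRATE_MBPS) -> float:
    """Total data transferred in a 30-day month, in terabytes (10**12 bytes)."""
    bits = cameras * bitrate_mbps * 1e6 * SECONDS_PER_MONTH
    return bits / 8 / 1e12


for n in (1, 100, 1000):
    print(f"{n:>5} cameras: {aggregate_gbps(n):6.3f} Gbit/s, "
          f"{monthly_terabytes(n):9.1f} TB/month")
```

Under that assumption, 1,000 cameras need roughly 4 Gbit/s of sustained uplink and move about 1,300 TB (1.3 PB) a month, which is the kind of load that local processing at the edge is meant to avoid sending across the network.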

Edge-computing hardware and services help solve this problem by being a local source of processing and storage for many of these systems. An edge gateway, for example, can process data from an edge device and then send only the relevant data to the cloud, reducing bandwidth needs. Alternatively, when a real-time application needs an immediate response, the gateway can send results straight back to the edge device.
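
The filtering role of a gateway can be illustrated with a minimal sketch. It assumes a hypothetical setup in which the gateway collects a window of sensor readings and forwards only one summary per sensor plus any individual reading above an alert threshold. The `Reading` type, the threshold value and the message format are all illustrative, not any particular product's API.

```python
from __future__ import annotations

from dataclasses import dataclass
from statistics import mean


@dataclass
class Reading:
    sensor_id: str
    value: float  # e.g. a temperature in degrees Celsius


# Hypothetical alert threshold; a real deployment would set this per sensor.
ALERT_THRESHOLD = 90.0


def filter_at_gateway(readings: list[Reading]) -> list[dict]:
    """Reduce a window of raw readings to the messages worth sending upstream:
    every reading above the threshold, plus one summary per sensor."""
    outbound: list[dict] = []
    by_sensor: dict[str, list[float]] = {}
    for r in readings:
        by_sensor.setdefault(r.sensor_id, []).append(r.value)
        if r.value > ALERT_THRESHOLD:
            outbound.append({"type": "alert", "sensor": r.sensor_id, "value": r.value})
    for sensor, values in by_sensor.items():
        outbound.append({"type": "summary", "sensor": sensor,
                         "count": len(values), "mean": mean(values), "max": max(values)})
    return outbound


window = [Reading("press-1", v) for v in (70.1, 71.3, 69.8, 93.5, 70.4)]
messages = filter_at_gateway(window)
print(f"{len(window)} readings in, {len(messages)} messages out")
```

Here five raw readings become two upstream messages (one alert and one summary). Across thousands of sensors sampling continuously, that kind of reduction is where the bandwidth savings come from.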

These edge devices can include many different things, such as an IoT sensor, an employee’s notebook computer, their latest smartphone, the security camera or even the internet-connected microwave oven in the office break room. Edge gateways themselves are considered edge devices within an edge-computing infrastructure.

Edge computing use cases

There are as many different edge use cases as there are users – everyone’s arrangement will be different – but several industries have been particularly at the forefront of edge computing. Manufacturers and heavy industry use edge hardware as an enabler for delay-intolerant applications, keeping the processing power for things like automated coordination of heavy machinery on a factory floor close to where it’s needed. The edge also provides a way for those companies to integrate IoT applications like predictive maintenance close to the machines. Similarly, agricultural users can use edge computing as a collection layer for data from a wide range of connected devices, including soil and temperature sensors, combines and tractors, and more.

The hardware required for different types of deployment will differ substantially. Industrial users, for example, will put a premium on reliability and low latency. That calls for ruggedized edge nodes that can operate in the harsh environment of a factory floor, and for dedicated communication links (private 5G, dedicated Wi-Fi networks or even wired connections). Connected agriculture users, by contrast, will still require rugged edge devices to cope with outdoor deployment, but the connectivity piece could look quite different. Low latency might still be a requirement for coordinating the movement of heavy equipment, but environmental sensors are likely to need longer range while sending far less data. For them, a low-power wide-area network (LPWAN) technology such as Sigfox could be the best choice.
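
The connectivity trade-offs described above can be written down as a small decision function. This is a toy sketch: the `LinkRequirements` fields, the numeric cut-offs and the category labels are hypothetical. Real link selection also weighs cost, power budget, spectrum and coverage.

```python
from dataclasses import dataclass


@dataclass
class LinkRequirements:
    needs_low_latency: bool  # e.g. coordinating moving machinery
    range_km: float          # distance from the device to the nearest edge node
    data_rate_kbps: float    # sustained data the device produces


# Hypothetical cut-offs, chosen only to illustrate the trade-off.
LOW_DATA_KBPS = 10.0
LONG_RANGE_KM = 1.0


def suggest_link(req: LinkRequirements) -> str:
    """Map a device's requirements to a connectivity class."""
    if req.needs_low_latency:
        return "dedicated link (private 5G, dedicated Wi-Fi or wired)"
    if req.data_rate_kbps <= LOW_DATA_KBPS and req.range_km >= LONG_RANGE_KM:
        return "LPWAN (e.g. Sigfox)"
    return "shared Wi-Fi"


factory_robot = LinkRequirements(needs_low_latency=True, range_km=0.05, data_rate_kbps=2000)
soil_sensor = LinkRequirements(needs_low_latency=False, range_km=5, data_rate_kbps=0.1)
print(suggest_link(factory_robot))  # dedicated link (private 5G, dedicated Wi-Fi or wired)
print(suggest_link(soil_sensor))    # LPWAN (e.g. Sigfox)
```

Latency is checked first because, in the industrial case, it overrides every other consideration. Range and data rate only matter once a device can tolerate delay.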

Other use cases present different challenges entirely. Retailers can use edge nodes as an in-store clearinghouse for a host of different functionality, tying point-of-sale data together with targeted promotions, tracking foot traffic, and more for a unified store management application. The connectivity piece here could be simple – in-house Wi-Fi for every device – or more complex, with Bluetooth or other low-power connectivity servicing traffic tracking and promotional services, and Wi-Fi reserved for point-of-sale and self-checkout.

Edge equipment

The physical architecture of the edge can be complicated, but the basic idea is that client devices connect to a nearby edge module for more responsive processing and smoother operations. Terminology varies, so you’ll hear these modules called “edge servers” and “edge gateways,” among other names.

DIY and service options

The way an edge system is purchased and deployed can also vary widely. On one end of the spectrum, a business might want to handle much of the process itself. This would involve selecting edge devices, probably from a hardware vendor such as Dell, HPE or IBM, architecting a network that’s adequate to the needs of the use case, and buying management and analysis software capable of doing what’s necessary. That’s a lot of work and would require considerable in-house IT expertise, but it could still be an attractive option for a large organization that wants a fully customized edge deployment.

On the other end of the spectrum, vendors in particular verticals are increasingly marketing edge services that they manage. An organization that wants to take this option can simply ask a vendor to install its own equipment, software and networking and pay a regular fee for use and maintenance. Industrial IoT (IIoT) offerings from companies like GE and Siemens fall into this category. This has the advantage of being easy and relatively headache-free in terms of deployment, but heavily managed services like this might not be available for every use case.

Benefits

For many companies, cost savings alone can be a reason to deploy edge computing. Companies that initially embraced the cloud for many of their applications may have discovered that bandwidth costs were higher than expected and are looking for a less expensive alternative. Edge computing might be a fit.

Increasingly, though, the biggest benefit of edge computing is the ability to process and store data faster, enabling more efficient real-time applications that are critical to companies. Before edge computing, a smartphone scanning a person’s face for facial recognition would need to run the facial recognition algorithm through a cloud-based service, adding the delay of a round trip to a distant data center to every scan. With an edge computing model, the algorithm could run locally on an edge server or gateway, or even on the smartphone itself, given the increasing power of smartphones. Applications such as virtual and augmented reality, self-driving cars, smart cities and even building-automation systems require fast processing and response.
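
One way to reason about where a step like facial recognition should run is to compare the end-to-end latency of each candidate site against a latency budget. The sketch below treats latency as the sum of network round trip, upload time and compute time. The site names and all millisecond figures are hypothetical, chosen only to illustrate the comparison.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class ExecutionSite:
    name: str
    network_rtt_ms: float  # round trip to the site; 0 when running on the device itself
    upload_ms: float       # time to send the input (e.g. an image) to the site
    compute_ms: float      # time to run the recognition model at the site

    def latency_ms(self) -> float:
        """End-to-end latency seen by the user for one request."""
        return self.network_rtt_ms + self.upload_ms + self.compute_ms


def pick_site(sites: list[ExecutionSite], budget_ms: float) -> ExecutionSite | None:
    """Return the lowest-latency site that fits within the budget, or None if none does."""
    feasible = [s for s in sites if s.latency_ms() <= budget_ms]
    return min(feasible, key=lambda s: s.latency_ms(), default=None)


# Hypothetical figures for a single face-recognition request.
sites = [
    ExecutionSite("on-device", network_rtt_ms=0, upload_ms=0, compute_ms=60),
    ExecutionSite("edge gateway", network_rtt_ms=2, upload_ms=5, compute_ms=15),
    ExecutionSite("cloud region", network_rtt_ms=40, upload_ms=25, compute_ms=10),
]
best = pick_site(sites, budget_ms=100)
print(best.name if best else "no site meets the budget")  # edge gateway
```

With these assumed numbers, the edge gateway wins at 22 ms, against 60 ms on the device and 75 ms in the cloud. With a faster phone chip or a closer cloud region the answer could change, so in practice the choice would be driven by measured figures.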

“Edge computing has evolved significantly from the days of isolated IT at ROBO [Remote Office Branch Office] locations,” says Kuba Stolarski, a research director at IDC, in the “Worldwide Edge Infrastructure (Compute and Storage) Forecast, 2019-2023” report. “With enhanced interconnectivity enabling improved edge access to more core applications, and with new IoT and industry-specific business use cases, edge infrastructure is poised to be one of the main growth engines in the server and storage market for the next decade and beyond.”

Companies such as Nvidia have recognized the need for more processing at the edge, which is why we’re seeing new system modules that include artificial intelligence functionality built into them. The company’s latest Jetson Xavier NX module, for example, is smaller than a credit card and can be built into devices such as drones, robots and medical devices. AI algorithms require large amounts of processing power, which is why most of them run via cloud services. The growth of AI chipsets that can handle processing at the edge will allow for better real-time responses within applications that need instant computing.

Privacy and security

From a security standpoint, data at the edge can be troublesome, especially when it’s being handled by many different devices that might not be as secure as centralized or cloud-based systems. As the number of IoT devices grows, it’s imperative that IT understands the potential security issues and makes sure those systems can be secured. This includes encrypting data, employing access-control methods and possibly using VPN tunneling.
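
As one illustration of the access-control piece, the sketch below implements a deny-by-default allowlist: a device may act only on resources it has been explicitly granted. The device names, resource paths and policy format are hypothetical. A production system would tie the check to authenticated device identities rather than a hard-coded dictionary, and would still need encryption in transit.

```python
from __future__ import annotations

# Hypothetical policy: which (action, resource) pairs each device is allowed.
POLICY: dict[str, set[tuple[str, str]]] = {
    "camera-lobby": {("publish", "video/lobby")},
    "gateway-1": {("publish", "telemetry/line-1"), ("subscribe", "commands/line-1")},
}


def is_allowed(device_id: str, action: str, resource: str) -> bool:
    """Deny by default: permit only pairs explicitly granted to this device.
    Unknown devices get an empty grant set and are therefore always refused."""
    return (action, resource) in POLICY.get(device_id, set())


print(is_allowed("camera-lobby", "publish", "video/lobby"))        # True
print(is_allowed("camera-lobby", "subscribe", "commands/line-1"))  # False
print(is_allowed("unknown-device", "publish", "video/lobby"))      # False
```

Denying by default means a newly added or compromised device gains no access until someone grants it, which limits the damage a single insecure edge device can do.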

Furthermore, differing device requirements for processing power, electricity and network connectivity can affect the reliability of an edge device. This makes redundancy and failover management crucial for devices that process data at the edge, so that data is still delivered and processed correctly when a single node goes down.
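
A minimal sketch of client-side failover follows. It assumes each edge node is reached through a handler function that raises an exception when the node is unavailable. The node names, the `NodeUnavailable` exception and the simulated handlers are hypothetical stand-ins for real network calls. A production design would also need health checks, retries with backoff, and a way to avoid processing the same data twice.

```python
from __future__ import annotations

from typing import Callable


class NodeUnavailable(Exception):
    """Raised by a node handler when that node cannot accept work."""


def process_with_failover(
    payload: bytes, nodes: list[tuple[str, Callable[[bytes], str]]]
) -> tuple[str, str]:
    """Try each edge node in priority order and return (node name, result)
    from the first one that succeeds; raise if every node fails."""
    errors: list[str] = []
    for name, handler in nodes:
        try:
            return name, handler(payload)
        except NodeUnavailable as exc:
            errors.append(f"{name}: {exc}")  # record the failure, fall through to the next node
    raise RuntimeError("all edge nodes failed: " + "; ".join(errors))


# Simulated nodes for illustration: the primary is down, the secondary works.
def primary(payload: bytes) -> str:
    raise NodeUnavailable("power loss")


def secondary(payload: bytes) -> str:
    return f"processed {len(payload)} bytes"


node, result = process_with_failover(b"sensor-frame", [("edge-a", primary), ("edge-b", secondary)])
print(node, result)  # edge-b processed 12 bytes
```

Ordering the nodes by priority keeps traffic on the preferred node in normal operation. The secondary only receives work when the primary fails.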

Edge computing and 5G

Around the world, carriers are deploying 5G wireless technologies, which promise the benefits of high bandwidth and low latency for applications, enabling companies to go from a garden hose to a firehose with their data bandwidth. Instead of just offering the faster speeds and telling companies to continue processing data in the cloud, many carriers are working edge-computing strategies into their 5G deployments in order to offer faster real-time processing, especially for mobile devices, connected cars and self-driving cars.

Wireless carriers have begun rolling out licensed edge services, an even less hands-on option than managed hardware. The idea here is to have edge nodes live virtually at, say, a Verizon base station near the edge deployment, using 5G’s network slicing feature to carve out a dedicated slice of network capacity for instant, no-installation-required connectivity. Verizon’s 5G Edge, AT&T’s Multi-Access Edge, and T-Mobile’s partnership with Lumen all represent this type of option.

Gartner’s 2021 strategic roadmap for edge computing highlights the continued industry interest in 5G for edge computing, saying that edge has become part and parcel of many 5G deployments. Partnerships between cloud hyperscalers such as Amazon and Microsoft and the major wireless carriers will be key to widespread uptake of this type of mobile edge computing.

It’s clear that while the initial goal for edge computing was to reduce bandwidth costs for IoT devices over long distances, the growth of real-time applications that require local processing and storage capabilities will continue to drive the technology forward over the coming years.


Join the Network World communities on Facebook and LinkedIn to comment on topics that are top of mind.